How it works

When the TTS node produces an FInworldData_TTSOutput, it includes a Timestamps array. Each entry is an FInworldAudioChunkTimestamp: a word with its start/end times and a Phones array of FInworldPhoneSpan entries. Each span carries the phoneme symbol, its viseme category, its timestamp, and its duration.

At playback time, UInworldVoiceAudioComponent fires OnVoiceAudioPlayback every tick with the current FInworldVoiceAudioPlaybackInfo (elapsed playback time) and the cached phone spans. Pass these into a Blueprint Function Library (BFL) function to get per-viseme or per-phoneme blend weights, then feed those weights into the Inworld Viseme AnimGraph node.

Data types

FInworldData_TTSOutput

The output of a TTS node. Contains everything needed for playback and lip-sync.

| Field | Type | Description |
|---|---|---|
| Audio | FInworldData_Audio | The synthesized PCM audio |
| Text | FString | The text that was synthesized |
| Timestamps | TArray<FInworldAudioChunkTimestamp> | Per-word timing and phonetic breakdown |

FInworldAudioChunkTimestamp

One word in the utterance, with its time range and phone-level detail.

| Field | Type | Description |
|---|---|---|
| Token | FString | The word text |
| StartTime | float | Word start time in seconds |
| EndTime | float | Word end time in seconds |
| Phones | TArray<FInworldPhoneSpan> | Per-phoneme breakdown for this word |
| bIsPartial | bool | true if this word may still change (streaming update) |

FInworldPhoneSpan

One phoneme within a word. The source of all lip-sync timing.

| Field | Type | Description |
|---|---|---|
| Phoneme | FString | IPA phoneme symbol (e.g. "b", "æ") |
| Viseme | FString | Viseme category string (e.g. "BMP", "AEI") |
| Timestamp | float | Time in seconds when this phoneme sounds |
| Duration | float | Duration of this phoneme in seconds |
| WordIndexAtAudioChunk | int32 | Index of the parent word in Timestamps |
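Because each FInworldPhoneSpan carries a Timestamp and a Duration, finding the phoneme that is sounding at a given playback time is an interval lookup. The standalone C++ sketch below illustrates that lookup; PhoneSpan and FindActiveSpan are hypothetical names, the struct only mirrors the documented fields, and the sketch assumes spans are sorted by Timestamp and do not overlap. It is not the plugin's implementation.

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <vector>

// Hypothetical plain-C++ mirror of FInworldPhoneSpan (documented fields only).
struct PhoneSpan {
    std::string Phoneme;           // IPA symbol, e.g. "b"
    std::string Viseme;            // viseme category, e.g. "BMP"
    float Timestamp = 0.0f;        // start time in seconds
    float Duration = 0.0f;         // length in seconds
    int WordIndexAtAudioChunk = 0; // index of the parent word
};

// Returns the index of the span covering time t, or -1 if t lies before the
// first span, in a gap between spans, or after the last span.
// Assumes spans are sorted by Timestamp and do not overlap.
int FindActiveSpan(const std::vector<PhoneSpan>& spans, float t) {
    // First span whose start is strictly after t.
    auto it = std::upper_bound(spans.begin(), spans.end(), t,
        [](float time, const PhoneSpan& s) { return time < s.Timestamp; });
    if (it == spans.begin()) return -1;  // t precedes every span
    --it;                                // last span starting at or before t
    if (t < it->Timestamp + it->Duration)
        return static_cast<int>(it - spans.begin());
    return -1;                           // t falls after that span ends
}
```

The binary search keeps the per-tick cost logarithmic in the number of spans; for short utterances a linear scan would work just as well.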

FInworldVisemeBlends

Blend weights for the 12 Inworld viseme categories plus STOP, each in [0, 1]. STOP represents silence/rest.

| Field | Sounds |
|---|---|
| BMP | b, m, p |
| FV | f, v |
| TH | th |
| CDGKNSTXYZ | c, d, g, k, n, s, t, x, y, z |
| CHJSH | ch, j, sh |
| L | l |
| R | r |
| QW | q, w |
| AEI | a, e, i |
| EE | ee |
| O | o |
| U | u |
| STOP | silence / rest (defaults to 1.0) |
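The table implies one float per category, with STOP at 1.0 when nothing is being said. A minimal standalone sketch of that layout follows; VisemeBlends, VisemeFromString, and SingleVisemeBlend are hypothetical names rather than plugin API, and treating an empty or unrecognized viseme string as rest is an assumption of this sketch.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <string>

// Category order follows the table above; STOP is last, Count is the array size.
enum class Viseme { BMP, FV, TH, CDGKNSTXYZ, CHJSH, L, R, QW, AEI, EE, O, U, STOP, Count };

// Hypothetical plain-C++ mirror of FInworldVisemeBlends: one weight in [0, 1] per category.
struct VisemeBlends {
    std::array<float, static_cast<std::size_t>(Viseme::Count)> Weights{};  // all zero

    VisemeBlends() { (*this)[Viseme::STOP] = 1.0f; }  // rest by default

    float& operator[](Viseme v) { return Weights[static_cast<std::size_t>(v)]; }
    float operator[](Viseme v) const { return Weights[static_cast<std::size_t>(v)]; }
};

// Maps a category string (as stored in FInworldPhoneSpan::Viseme) to the enum.
// Empty or unrecognized strings fall back to STOP (assumption of this sketch).
Viseme VisemeFromString(const std::string& name) {
    static const std::array<const char*, 12> kNames = {
        "BMP", "FV", "TH", "CDGKNSTXYZ", "CHJSH", "L", "R", "QW", "AEI", "EE", "O", "U"};
    for (std::size_t i = 0; i < kNames.size(); ++i)
        if (name == kNames[i]) return static_cast<Viseme>(i);
    return Viseme::STOP;
}

// Blends with one category at full weight, or pure rest if the name maps to STOP.
VisemeBlends SingleVisemeBlend(const std::string& name) {
    VisemeBlends blends;
    const Viseme v = VisemeFromString(name);
    if (v != Viseme::STOP) {
        blends[Viseme::STOP] = 0.0f;
        blends[v] = 1.0f;
    }
    return blends;
}
```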

FInworldVoiceAudioPlaybackInfo

Playback timing provided each tick by OnVoiceAudioPlayback. Pass this to the BFL functions to get the correct viseme weights for the current frame.

| Field | Type | Description |
|---|---|---|
| Utterance.PlayedDuration | float | Seconds elapsed in the current utterance; used by the BFL functions to look up the active phone span |
| Utterance.TotalDuration | float | Total duration of the utterance |
| Utterance.PlayedPercent | float | Playback progress [0, 1] |
| Interaction.PlayedDuration | float | Seconds elapsed across the whole interaction |

UInworldVoiceAudioComponent

The UInworldVoiceAudioComponent handles TTS audio playback and is the main source of per-frame lip-sync data.

Methods

| Method | Description |
|---|---|
| QueueVoice(FInworldData_DataStream_TTSOutput) | Queue a TTS stream chunk for playback |
| Interrupt() | Stop playback immediately and clear the queue |
| GetCurrentPhoneSpans() | Returns the cached TArray<FInworldPhoneSpan> for the current utterance; use with GetVisemeBlends |

Events

| Event | Signature | Description |
|---|---|---|
| OnVoiceAudioStart | (Component, FInworldData_TTSOutput, bInteractionStart) | Fired when a new utterance begins |
| OnVoiceAudioPlayback | (Component, PlaybackInfo, FInworldData_TTSOutput, PhoneSpans) | Fired every tick during playback; primary hook for lip-sync |
| OnVoiceAudioUpdated | (Component, FInworldData_TTSOutput) | Fired when TTS output data is updated |
| OnVoiceAudioComplete | (Component, FInworldData_TTSOutput, bInteractionEnd) | Fired when an utterance finishes normally |
| OnVoiceAudioInterrupt | (Component, FInworldData_TTSOutput) | Fired when playback is interrupted |

OnVoiceAudioPlayback is the recommended binding point for lip-sync — it provides PlaybackInfo and pre-built PhoneSpans in one call.

Blueprint Function Library — Viseme & Phoneme functions

All functions are on UInworldBlueprintFunctionLibrary.

| Function | Category | Description |
|---|---|---|
| BuildPhoneSpansFromTTSOutput(TTSOutput, OutPhoneSpans) | Viseme | Flattens Timestamps[].Phones[] into a single array. Call once per utterance and cache the result. |
| GetVisemeBlends(PlaybackInfo, PhoneSpans) | Viseme | Returns FInworldVisemeBlends for the current playback time using a cached span array. Recommended for performance. |
| GetVisemeBlendsTTS(PlaybackInfo, TTSOutput) | Viseme | Same as GetVisemeBlends, but builds spans internally from TTSOutput on each call. Convenient, but less efficient. |
| GetPhonemeBlends(PlaybackInfo, PhoneSpans) | Phoneme | Returns FInworldPhonemeBlends (raw IPA phoneme weights) from a cached span array. |
| GetPhonemeBlendsTTS(PlaybackInfo, TTSOutput) | Phoneme | Same as GetPhonemeBlends, but reads directly from TTSOutput. |
| GetCurrentWord(PlaybackInfo, TTSOutput) | Playback | Returns FInworldAudioVoiceWord, the word currently being spoken (Word, WordIndex, TotalWordCount). |
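GetCurrentWord resolves the playback time against the per-word StartTime/EndTime ranges in Timestamps. The sketch below shows one way to do that in plain C++; WordTiming, CurrentWord, and GetCurrentWordSketch are hypothetical stand-ins for FInworldAudioChunkTimestamp, FInworldAudioVoiceWord, and the BFL function, and reporting WordIndex -1 in the gaps between words is a convention of this sketch, not documented plugin behavior.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Hypothetical mirror of the FInworldAudioChunkTimestamp fields used here.
struct WordTiming {
    std::string Token;
    float StartTime = 0.0f;
    float EndTime = 0.0f;
};

// Hypothetical mirror of FInworldAudioVoiceWord.
struct CurrentWord {
    std::string Word;        // empty when no word is active
    int WordIndex = -1;      // -1 when no word is active (sketch convention)
    int TotalWordCount = 0;
};

// Returns the word whose [StartTime, EndTime) range contains playedDuration.
// A linear scan keeps the sketch short.
CurrentWord GetCurrentWordSketch(const std::vector<WordTiming>& words, float playedDuration) {
    CurrentWord result;
    result.TotalWordCount = static_cast<int>(words.size());
    for (std::size_t i = 0; i < words.size(); ++i) {
        const WordTiming& w = words[i];
        if (playedDuration >= w.StartTime && playedDuration < w.EndTime) {
            result.Word = w.Token;
            result.WordIndex = static_cast<int>(i);
            break;
        }
    }
    return result;
}
```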

For best performance, bind to OnVoiceAudioPlayback, cache the phone spans on OnVoiceAudioStart (using BuildPhoneSpansFromTTSOutput), then call GetVisemeBlends(PlaybackInfo, CachedPhoneSpans) each tick. This avoids re-flattening the timestamp array every frame.

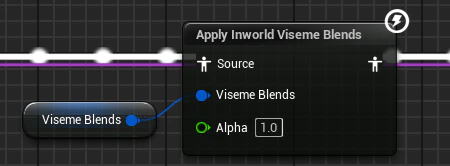
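The caching pattern recommended in this section splits the work into a once-per-utterance flattening step and a cheap per-tick lookup. The standalone sketch below assumes simplified mirror types; BuildPhoneSpans follows the documented behavior of BuildPhoneSpansFromTTSOutput (flattening Timestamps[].Phones[] into one array), while UtteranceLipSync, OnStart, and OnTick are hypothetical names. OnTick returns only the active viseme name instead of full blend weights, so it is a simplified stand-in for GetVisemeBlends, which may also blend between neighboring spans.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Simplified, hypothetical mirrors of the documented types.
struct PhoneSpan { std::string Viseme; float Timestamp = 0.0f; float Duration = 0.0f; };
struct WordTimestamp { std::string Token; std::vector<PhoneSpan> Phones; };
struct TTSOutput { std::vector<WordTimestamp> Timestamps; };

// Once per utterance: flatten Timestamps[].Phones[] into one array, preserving order.
std::vector<PhoneSpan> BuildPhoneSpans(const TTSOutput& out) {
    std::vector<PhoneSpan> spans;
    for (const WordTimestamp& word : out.Timestamps)
        spans.insert(spans.end(), word.Phones.begin(), word.Phones.end());
    return spans;
}

// Hypothetical holder for the "cache on start, look up each tick" pattern.
class UtteranceLipSync {
public:
    // Call from the equivalent of OnVoiceAudioStart.
    void OnStart(const TTSOutput& out) { cached_ = BuildPhoneSpans(out); }

    // Call every tick with PlaybackInfo.Utterance.PlayedDuration.
    // Returns the active viseme, or "STOP" between or after spans.
    std::string OnTick(float playedDuration) const {
        for (const PhoneSpan& s : cached_)
            if (playedDuration >= s.Timestamp && playedDuration < s.Timestamp + s.Duration)
                return s.Viseme;
        return "STOP";
    }

    std::size_t CachedSpanCount() const { return cached_.size(); }

private:
    std::vector<PhoneSpan> cached_;  // rebuilt only when a new utterance starts
};
```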

Inworld Viseme AnimGraph node

UAnimGraphNode_InworldViseme is an Animation Blueprint node that applies morph target curves from viseme blend weights. Bone transforms pass through unchanged — only the curve track (morph targets) is modified.

Properties

| Property | Type | Default | Pin | Description |
|---|---|---|---|---|
| Source | FPoseLink | n/a | Yes | Incoming pose; bones pass through unmodified |
| VisemeBlends | FInworldVisemeBlends | n/a | Yes | Per-viseme weights from the BFL functions, updated each tick |
| VisemeData | UInworldVisemeDataAsset* | n/a | No | Data asset mapping visemes to morph target curve names and weights |
| SmoothingSpeed | float | 12.0 | No | Interpolation speed toward target weights per second. 0 disables smoothing |
| Alpha | float | 1.0 | No | Overall blend strength [0, 2]. 0 suppresses lip-sync, 1 is full, 2 doubles morph values |

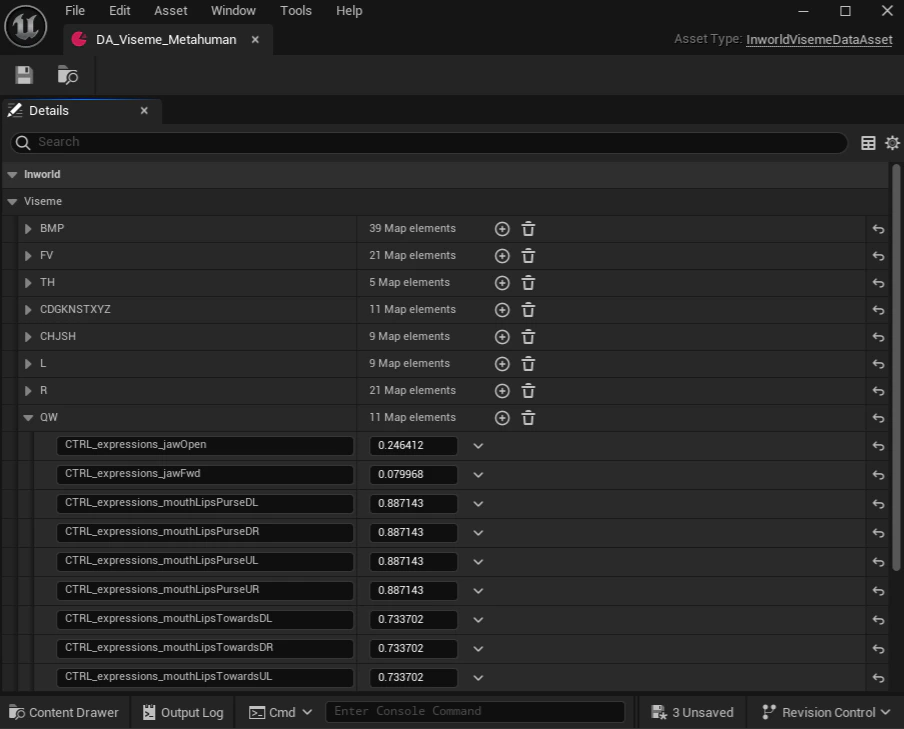
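One plausible reading of SmoothingSpeed and Alpha is an FInterpTo-style step toward each target weight followed by a multiply. The sketch below assumes that model; SmoothWeight and ApplyAlpha are hypothetical helpers, and the node's exact formula is not documented here.

```cpp
#include <algorithm>
#include <cassert>

// FInterpTo-style step: move a fraction (deltaTime * speed, clamped to [0, 1])
// of the remaining distance toward the target. speed <= 0 snaps straight to
// the target, matching "0 disables smoothing".
float SmoothWeight(float current, float target, float deltaTime, float speed) {
    if (speed <= 0.0f) return target;
    const float fraction = std::min(1.0f, std::max(0.0f, deltaTime * speed));
    return current + (target - current) * fraction;
}

// Scale a smoothed weight by the node's Alpha, clamped to the documented [0, 2] range.
float ApplyAlpha(float smoothedWeight, float nodeAlpha) {
    return smoothedWeight * std::min(2.0f, std::max(0.0f, nodeAlpha));
}
```

Under this model, the default speed of 12.0 at 60 fps moves each weight 20% of the remaining distance per frame (12 × 1/60 = 0.2), and any frame time of 1/12 s or longer lands directly on the target.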

UInworldVisemeDataAsset

A UDataAsset that maps each viseme category to one or more morph target curve name/weight pairs. You create one asset per character rig, then assign it to the VisemeData property on the AnimGraph node.

Each viseme entry is a TMap<FName, float> where the key is the morph target curve name on your Skeletal Mesh and the value is the contribution weight for that viseme.

Supported viseme entries: BMP, FV, TH, CDGKNSTXYZ, CHJSH, L, R, QW, AEI, EE, O, U

(STOP is handled automatically — it does not need an entry in the data asset.)

Setting up a VisemeDataAsset

- In the Content Browser, right-click and choose Miscellaneous > Data Asset
- Select InworldVisemeDataAsset as the class
- Open the asset and, for each viseme entry, add the morph target curve names from your Skeletal Mesh and their blend weights
- Assign the asset to the VisemeData property on your Inworld Viseme AnimGraph node

CTRL_expressions_* curves. Weights are additive — multiple curves per viseme are all applied simultaneously.
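Because weights are additive, a curve referenced by several visemes receives the sum of their contributions. The sketch below shows that composition with std::map standing in for TMap<FName, float>; ComposeCurves and the curve names used with it are hypothetical, and the sketch does not clamp the summed values because clamping behavior is not documented.

```cpp
#include <cassert>
#include <map>
#include <string>

// Hypothetical mirror of UInworldVisemeDataAsset: viseme -> (curve name -> weight),
// standing in for the per-viseme TMap<FName, float> entries.
using CurveWeights = std::map<std::string, float>;
using VisemeCurveMap = std::map<std::string, CurveWeights>;

// For every viseme with a blend weight, add blend * weight to each of its curves.
// A curve shared by several visemes accumulates all contributions (additive).
// Visemes without an entry (such as STOP) contribute nothing.
std::map<std::string, float> ComposeCurves(const VisemeCurveMap& asset,
                                           const std::map<std::string, float>& blends) {
    std::map<std::string, float> curves;
    for (const auto& entry : blends) {
        auto it = asset.find(entry.first);
        if (it == asset.end()) continue;  // STOP or an unmapped viseme
        for (const auto& curve : it->second)
            curves[curve.first] += entry.second * curve.second;  // starts at 0.0f
    }
    return curves;
}
```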

Setting up lip-sync in an Animation Blueprint

Add the Inworld Viseme node to your AnimGraph

Open your character’s Animation Blueprint and navigate to the AnimGraph. Search for Inworld Viseme and place the node in your graph, wiring its Source input from your existing pose and its output toward Output Pose. Assign your UInworldVisemeDataAsset to the Viseme Data property on the node.

Create a VisemeBlends variable

Add an FInworldVisemeBlends variable to the Animation Blueprint. Wire it into the Viseme Blends pin on the Inworld Viseme node. This variable will be updated each tick from the character component.

Bind to OnVoiceAudioPlayback

In the character actor’s Blueprint (or on BeginPlay in the Anim BP), get the UInworldVoiceAudioComponent and bind to OnVoiceAudioPlayback. In the callback, call GetVisemeBlends(PlaybackInfo, PhoneSpans) and store the result into your FInworldVisemeBlends variable using Set Anim Instance Variable or a direct property write.

For best performance, also bind to OnVoiceAudioStart and call BuildPhoneSpansFromTTSOutput there to cache the spans array. Then use GetVisemeBlends(PlaybackInfo, CachedPhoneSpans) in OnVoiceAudioPlayback instead of GetVisemeBlendsTTS.