Connect your BigQuery project to export router exposure data for analysis and import your product events to define custom metrics in Portal.


Prerequisites

CLI setup generator

Use the generator below to produce the `gcloud` and `bq` commands for your setup. It defaults to dataset ID `inworld_router_dataset`, location `US`, and service account name `inworld-sync`, and it generates only the roles required for the mode you select.

Install and authenticate the Google Cloud CLI and `bq` before running these commands. If you prefer the Google Cloud console, use the manual flow in the next section.

BigQuery dataset IDs can contain only letters, numbers, and underscores. For the dataset location, use a valid BigQuery region or multi-region, such as `US`, `EU`, or `us-central1`.

IAM changes can take a few minutes to propagate across Google Cloud. If Portal fails its initial validation right after you grant permissions, wait a few minutes and try again before assuming the setup is incorrect.
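The kind of command list the generator produces can be approximated in a few lines of Python. This is a minimal sketch, not the generator itself: it assumes the defaults above and the project-level roles listed in the console steps, uses only standard `gcloud iam service-accounts create`, `gcloud projects add-iam-policy-binding`, and `bq mk --dataset` invocations, and leaves out the impersonation grant and dataset-level permissions. The function name `setup_commands` and the project ID `my-gcp-project` are illustrative.

```python
import re

# Defaults stated for the CLI setup generator.
DEFAULT_DATASET = "inworld_router_dataset"
DEFAULT_LOCATION = "US"
DEFAULT_SA_NAME = "inworld-sync"

# Project-level roles per mode, taken from the console steps.
PROJECT_ROLES = {
    "export": ["roles/bigquery.jobUser"],
    "import": ["roles/bigquery.jobUser", "roles/bigquery.readSessionUser"],
    "both": ["roles/bigquery.jobUser", "roles/bigquery.readSessionUser"],
}


def setup_commands(project_id, mode="both", dataset_id=DEFAULT_DATASET,
                   location=DEFAULT_LOCATION, sa_name=DEFAULT_SA_NAME):
    """Return gcloud/bq command strings for one setup mode (illustrative sketch)."""
    if mode not in PROJECT_ROLES:
        raise ValueError(f"unknown mode: {mode!r}")
    if not re.fullmatch(r"[A-Za-z0-9_]+", dataset_id):
        raise ValueError("dataset ID may contain only letters, numbers, and underscores")

    sa_email = f"{sa_name}@{project_id}.iam.gserviceaccount.com"
    commands = []
    # Export writes into a dataset; import-only setups may reuse an existing one.
    if mode in ("export", "both"):
        commands.append(f"bq --location={location} mk --dataset {project_id}:{dataset_id}")
    commands.append(f"gcloud iam service-accounts create {sa_name} --project={project_id}")
    for role in PROJECT_ROLES[mode]:
        commands.append(
            f"gcloud projects add-iam-policy-binding {project_id} "
            f"--member=serviceAccount:{sa_email} --role={role}"
        )
    return commands


for cmd in setup_commands("my-gcp-project", mode="export"):
    print(cmd)
```

Keeping the role list keyed by mode mirrors the generator's behavior of emitting only what the selected mode needs, so an export-only setup never receives the Read Session User role.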

Console setup (alternative)

Create a BigQuery dataset

Create a BigQuery dataset in your GCP project to store router data. The CLI generator above defaults to dataset ID `inworld_router_dataset` in location `US`. If you only plan to import events and already have a source dataset, you can skip creating a new dataset.

Create a service account

Go to the Service Accounts page in your GCP project and create a new service account for the Inworld sync job. On the permissions page, grant it the following project-level roles:

- BigQuery Job User (`roles/bigquery.jobUser`): allows the account to run BigQuery jobs.

- BigQuery Read Session User (`roles/bigquery.readSessionUser`): required for importing events via the BigQuery Storage Read API. You can skip this role if you only plan to export data.
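Each role grant adds the service account to a binding in the project's IAM policy, which is a list of `bindings`, each pairing a `role` with its `members`. The sketch below models that read-modify-write on a plain dictionary so the effect of repeated grants is easy to see. It does not call any Google Cloud API, it omits the policy `etag` and conditions that a real update carries, and the helper names `add_binding` and `roles_for` are hypothetical.

```python
import copy


def add_binding(policy, role, member):
    """Return a copy of `policy` with `member` granted `role`; granting twice is a no-op."""
    updated = copy.deepcopy(policy)
    bindings = updated.setdefault("bindings", [])
    for binding in bindings:
        if binding["role"] == role:
            if member not in binding["members"]:
                binding["members"].append(member)
            return updated
    # No binding for this role yet: create one.
    bindings.append({"role": role, "members": [member]})
    return updated


def roles_for(policy, member):
    """List the roles a member holds in `policy`, sorted for stable output."""
    return sorted(b["role"] for b in policy.get("bindings", []) if member in b["members"])


sa = "serviceAccount:inworld-sync@my-gcp-project.iam.gserviceaccount.com"
policy = {"bindings": []}
policy = add_binding(policy, "roles/bigquery.jobUser", sa)
policy = add_binding(policy, "roles/bigquery.readSessionUser", sa)  # import only
policy = add_binding(policy, "roles/bigquery.jobUser", sa)  # repeated grant, no duplicate
print(roles_for(policy, sa))  # ['roles/bigquery.jobUser', 'roles/bigquery.readSessionUser']
```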

Grant Inworld impersonation access

Navigate to your newly created service account and open the Principals with access tab. Click Grant access and add the following principal:

Assign it the Service Account Token Creator role (`roles/iam.serviceAccountTokenCreator`). This allows the Inworld sync service to impersonate your service account for BigQuery operations.

Grant BigQuery dataset permissions

Go to BigQuery and select the dataset you want to use with Inworld. On the dataset page, go to Share → Manage Permissions and click Add principal. Enter the email of the service account you created in Step 2 and assign the appropriate role:

- BigQuery Data Editor — required for exporting router exposure data to your dataset

- BigQuery Data Viewer — required for importing events from your source tables

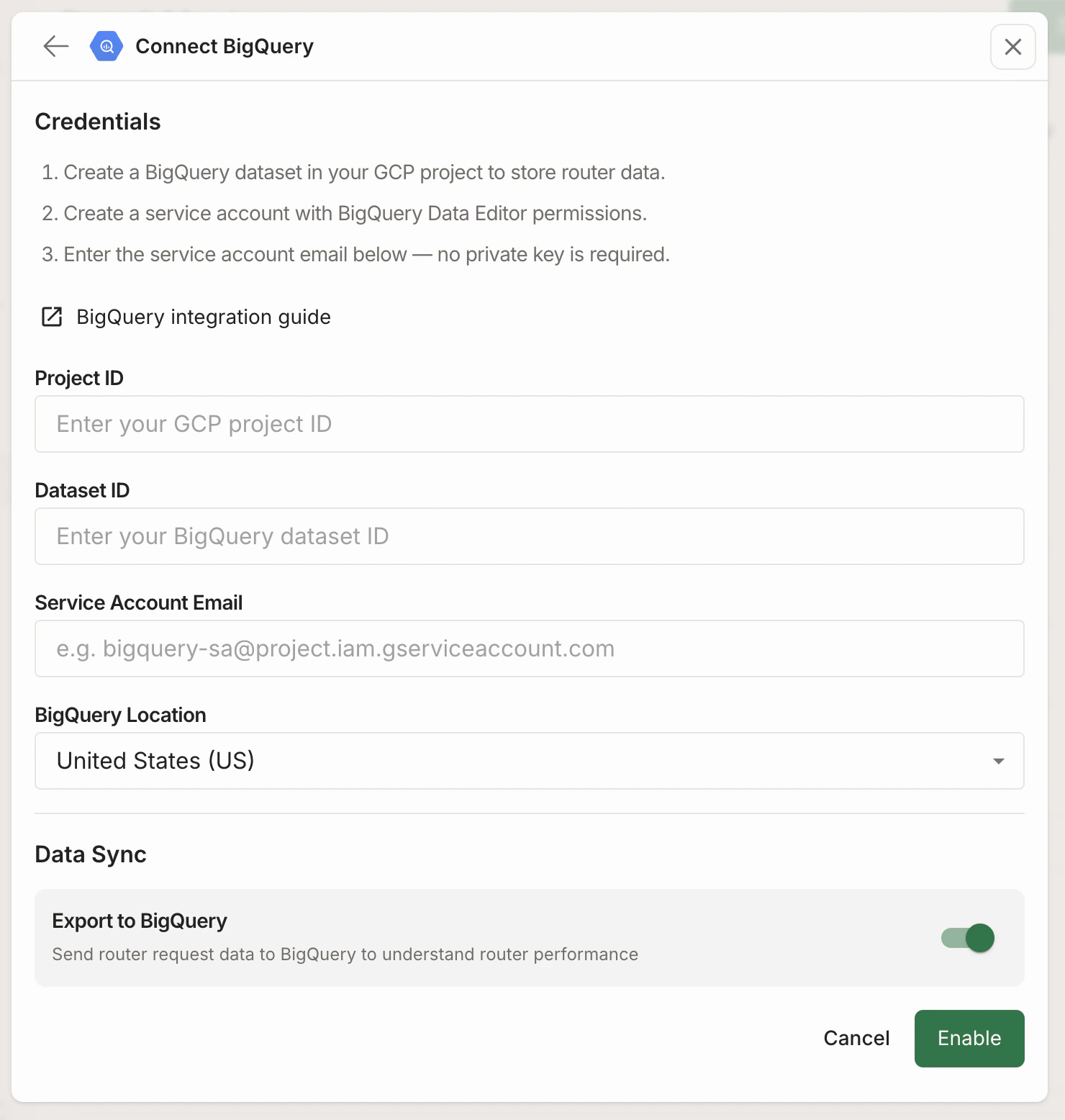

If you use Both mode (export and import), grant both roles.

Configure the integration in Portal

| Field | Value |
|---|---|
| Project ID | Your GCP project ID |
| Dataset ID | Your BigQuery dataset ID from Step 1 (used for export) |
| Service Account Email | The service account email from Step 2 |
| BigQuery Location | The region where your dataset is located |
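Before saving, it can help to sanity-check the four values locally. The dataclass below is a hypothetical helper, not part of Portal: it applies the dataset ID rule stated earlier and assumes the service account lives in the same project as the dataset, as in the setup steps. It cannot confirm that the location matches the dataset's actual region; only BigQuery, or Portal's own validation, can do that.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class BigQueryIntegration:
    """Hypothetical local copy of the Portal integration fields."""

    project_id: str
    dataset_id: str
    service_account_email: str
    location: str

    def problems(self):
        """Return human-readable issues; an empty list means none were found."""
        issues = []
        # Dataset IDs: letters, numbers, and underscores only.
        if not re.fullmatch(r"[A-Za-z0-9_]+", self.dataset_id):
            issues.append("dataset_id: use only letters, numbers, and underscores")
        # Assumes the service account was created in this same project.
        suffix = f"@{self.project_id}.iam.gserviceaccount.com"
        if not self.service_account_email.endswith(suffix):
            issues.append(f"service_account_email: expected an address ending in {suffix}")
        if not self.location.strip():
            issues.append("location: set this to the dataset's region, such as US")
        return issues


cfg = BigQueryIntegration(
    project_id="my-gcp-project",
    dataset_id="inworld_router_dataset",
    service_account_email="inworld-sync@my-gcp-project.iam.gserviceaccount.com",
    location="US",
)
print(cfg.problems())  # []
```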

Exporting to BigQuery

Turn on Export to BigQuery in the Data Sync section of your integration settings. When enabled, router exposure data is automatically written to your BigQuery dataset.

Make a request to your router

Call the Chat Completions API with your router, populating a unique user ID in the `user` field.

The value you pass in `user` is reported as `user_id` in the data export, so you can tie each request to the right user.
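A minimal request body, built with the Python standard library and not actually sent, looks roughly like this. It assumes an OpenAI-style Chat Completions body (`model`, `messages`, `user`); the endpoint URL and router identifier below are placeholders to replace with the values from the Chat Completions API reference, and authentication headers are omitted.

```python
import json
import urllib.request

# Placeholders only: substitute the real endpoint and router identifier.
CHAT_COMPLETIONS_URL = "https://api.example.invalid/v1/chat/completions"
ROUTER_MODEL = "your-router-id"


def build_router_request(user_id, prompt):
    """Build (but do not send) a Chat Completions request tagged with `user`."""
    body = {
        "model": ROUTER_MODEL,
        "messages": [{"role": "user", "content": prompt}],
        # Unique per end user; this is the value that appears as user_id in the export.
        "user": user_id,
    }
    return urllib.request.Request(
        CHAT_COMPLETIONS_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_router_request("user-1234", "Suggest a name for my cat.")
print(req.get_method(), json.loads(req.data)["user"])  # POST user-1234
```

Use the same identifier every time the same person calls the router; a value that changes per request would split one user's exposures across many `user_id` rows.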

Analyze your data

Router exposure data flows to your BigQuery dataset automatically; data syncs approximately every 8 hours. For each hour, you get one row per user for every router, route, and variant combination that user was exposed to. Each row includes:

- `alias_id`: your router's ID
- `route_id`: the route the user was routed to
- `variant_id`: the variant the user was exposed to
- `user_id`: the user who was exposed to this router, route, and variant
- `timestamp`: the time of the most recent event in the hourly bucket in which the user was exposed to this router, route, and variant
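To make the row semantics concrete, the sketch below collapses hypothetical per-request exposures into hourly rows: repeated exposures of the same user to the same router, route, and variant within one hour produce a single row whose `timestamp` is the latest one. It illustrates the described output only and is not Inworld's pipeline; bucketing by whole UTC hours is an assumption, and the input records are made up.

```python
from datetime import datetime, timezone


def hourly_exposure_rows(exposures):
    """Collapse per-request exposures into one row per hour, user, router,
    route, and variant, keeping the latest timestamp in each bucket."""
    latest = {}
    for e in exposures:
        # Assumption: buckets are whole UTC hours.
        hour = e["timestamp"].astimezone(timezone.utc).replace(minute=0, second=0, microsecond=0)
        key = (hour, e["alias_id"], e["route_id"], e["variant_id"], e["user_id"])
        if key not in latest or e["timestamp"] > latest[key]:
            latest[key] = e["timestamp"]
    return [
        {"alias_id": a, "route_id": r, "variant_id": v, "user_id": u, "timestamp": ts}
        for (_hour, a, r, v, u), ts in sorted(latest.items())
    ]


def t(hour, minute):
    return datetime(2024, 5, 1, hour, minute, tzinfo=timezone.utc)


def exposure(variant, user, ts):
    return {"alias_id": "router-a", "route_id": "route-1",
            "variant_id": variant, "user_id": user, "timestamp": ts}


rows = hourly_exposure_rows([
    exposure("v1", "user-1", t(10, 5)),
    exposure("v1", "user-1", t(10, 40)),  # same hour: merged, later time kept
    exposure("v1", "user-1", t(11, 2)),   # next hour: new row
    exposure("v2", "user-2", t(10, 15)),
])
for row in rows:
    print(row["user_id"], row["variant_id"], row["timestamp"].isoformat())
```

Because of this bucketing, counting rows measures user-hours of exposure rather than individual requests.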

Importing from BigQuery

Turn on Import from BigQuery in the Data Sync section to start pulling your product events into Portal.

Define events to import

In the Events to import section that appears after you enable import, click Add Event. For each event, map columns from a BigQuery table to the fields Inworld expects:

| Field | Description | Example |
|---|---|---|
| Event Name | A logical name for this event in Portal. | user_donation |
| Description | Optional description of when this event is triggered. | Fires when a user completes a donation |
| Dataset | The BigQuery dataset containing the source table. | user_data |
| Table Name | The BigQuery table to read events from. | donations |
| Event Field Name | The column that identifies the event type. | event_name |
| User ID Field Name | The column containing the user identifier. | user_id |
| Timestamp Field Name | The column with the event timestamp. | created_at |
| Event UUID Field Name | The column with a unique identifier per event row. | event_uuid |

Event names can only contain letters, numbers, underscores, and hyphens.
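One way to picture an event mapping is as a small record that validates the event name and renames source columns. The sketch below is hypothetical: the `EventMapping` class, its output keys, and the sample row values are illustrative, not a Portal API. It applies the naming rule above and assumes that columns not mapped to one of the fields are carried along as event properties.

```python
import re
from dataclasses import dataclass

EVENT_NAME_RE = re.compile(r"[A-Za-z0-9_-]+")


@dataclass(frozen=True)
class EventMapping:
    """Hypothetical mirror of one "Add Event" entry; not a Portal API."""

    event_name: str
    dataset: str
    table_name: str
    event_field: str
    user_id_field: str
    timestamp_field: str
    event_uuid_field: str
    description: str = ""

    def __post_init__(self):
        # Event names: letters, numbers, underscores, and hyphens only.
        if not EVENT_NAME_RE.fullmatch(self.event_name):
            raise ValueError(f"invalid event name: {self.event_name!r}")

    def normalize(self, row):
        """Map one source row (column -> value) onto the expected fields."""
        mapped = {self.event_field, self.user_id_field,
                  self.timestamp_field, self.event_uuid_field}
        return {
            "event_name": self.event_name,
            "event_type": row[self.event_field],
            "user_id": row[self.user_id_field],
            "timestamp": row[self.timestamp_field],
            "event_uuid": row[self.event_uuid_field],
            # Assumption: unmapped columns travel along as properties.
            "properties": {k: v for k, v in row.items() if k not in mapped},
        }


donations = EventMapping(
    event_name="user_donation", dataset="user_data", table_name="donations",
    event_field="event_name", user_id_field="user_id",
    timestamp_field="created_at", event_uuid_field="event_uuid",
    description="Fires when a user completes a donation",
)
row = {"event_name": "donation_completed", "user_id": "user-1234",
       "created_at": "2024-05-01T10:40:00Z", "event_uuid": "evt-001",
       "amount_usd": 5.0}
print(donations.normalize(row)["properties"])  # {'amount_usd': 5.0}
```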

Schema requirements

Your BigQuery source table must meet these requirements:

| Column | Type | Notes |
|---|---|---|
| Timestamp field | TIMESTAMP or DATETIME | Used to determine sync boundaries and event ordering |
| User ID field | STRING | Must match the `user` value passed in Chat Completions API requests for correlation with router exposures |
| Event UUID field | STRING | Must be unique per row to ensure idempotent imports |

Other columns in the table are imported as event properties, for example `user_message_count INT64`, `session_duration FLOAT64`, or `plan_name STRING`. Properties are automatically classified by type (string, number, boolean) during import so they can be used in metric definitions.

The service account configured in Prerequisites must have BigQuery Data Viewer access to the specified dataset and table.
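You can check a table schema against these requirements before adding the event. The sketch below takes a column-to-type mapping (for example, copied from the table's schema in the BigQuery console) and reports type mismatches. The mapping from BigQuery types to string, number, and boolean properties is an assumption about how classification might work; it includes legacy names such as `INTEGER`, `FLOAT`, and `BOOLEAN` because BigQuery can report types that way, and anything outside the mapping is flagged as `unsupported`.

```python
# Required column types, per the schema requirements table.
REQUIRED_TYPES = {
    "timestamp": {"TIMESTAMP", "DATETIME"},
    "user_id": {"STRING"},
    "event_uuid": {"STRING"},
}

# Assumed mapping from BigQuery type names (standard and legacy) to property kinds.
PROPERTY_KIND = {
    "STRING": "string",
    "INT64": "number", "INTEGER": "number",
    "FLOAT64": "number", "FLOAT": "number",
    "NUMERIC": "number", "BIGNUMERIC": "number",
    "BOOL": "boolean", "BOOLEAN": "boolean",
}


def check_schema(schema, event_field, timestamp_field, user_id_field, event_uuid_field):
    """Check required column types and classify the remaining columns.

    `schema` maps column name -> BigQuery type name. Returns (errors, properties).
    """
    required = {"timestamp": timestamp_field, "user_id": user_id_field,
                "event_uuid": event_uuid_field}
    errors = []
    for role, column in required.items():
        col_type = schema.get(column)
        if col_type is None:
            errors.append(f"missing {role} column {column!r}")
        elif col_type not in REQUIRED_TYPES[role]:
            allowed = " or ".join(sorted(REQUIRED_TYPES[role]))
            errors.append(f"{column!r} is {col_type}, expected {allowed}")
    mapped = set(required.values()) | {event_field}
    properties = {name: PROPERTY_KIND.get(col_type, "unsupported")
                  for name, col_type in schema.items() if name not in mapped}
    return errors, properties


schema = {
    "event_name": "STRING", "user_id": "STRING", "created_at": "TIMESTAMP",
    "event_uuid": "STRING", "user_message_count": "INT64",
    "session_duration": "FLOAT64", "plan_name": "STRING",
}
errors, props = check_schema(schema, "event_name", "created_at", "user_id", "event_uuid")
print(errors)  # []
print(props)  # {'user_message_count': 'number', 'session_duration': 'number', 'plan_name': 'string'}
```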

How import works

- Inworld reads from your BigQuery tables using the BigQuery Storage Read API for efficient data transfer.

- Events are synced approximately every 8 hours. Imports are incremental — only new rows since the last sync are fetched.

- User identity is matched using the User ID field. To correlate imported events with router exposures, pass the same user identifier as the `user` field in your Chat Completions API requests and in the User ID column of your BigQuery table.

- Once events are imported, their numeric properties are automatically discovered and become available for metric configuration.
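Incremental import with idempotent writes can be sketched as a timestamp watermark plus a set of already-seen event UUIDs. This illustrates the general technique under stated assumptions and is not Inworld's implementation: rows at or after the last watermark are re-read so rows sharing the boundary timestamp are not lost, and the UUID check turns those re-reads into no-ops. The function name, field defaults, and sample rows are hypothetical.

```python
from datetime import datetime, timezone


def incremental_batch(rows, watermark, seen_uuids,
                      ts_field="created_at", uuid_field="event_uuid"):
    """Select rows to import in one sync cycle.

    Rows at or after `watermark` are considered; `seen_uuids` is updated in
    place. Returns (new_rows, new_watermark).
    """
    new_rows = []
    for row in rows:
        if watermark is not None and row[ts_field] < watermark:
            continue  # covered by an earlier sync
        if row[uuid_field] in seen_uuids:
            continue  # already imported: re-reading it is a no-op
        seen_uuids.add(row[uuid_field])
        new_rows.append(row)
    new_watermark = max((r[ts_field] for r in new_rows), default=watermark)
    return new_rows, new_watermark


def t(hour, minute):
    return datetime(2024, 5, 1, hour, minute, tzinfo=timezone.utc)


seen = set()
first = [{"event_uuid": "e1", "created_at": t(9, 0)},
         {"event_uuid": "e2", "created_at": t(10, 0)}]
batch1, wm = incremental_batch(first, None, seen)

# e3 shares the boundary timestamp with e2 and must still be picked up.
second = first + [{"event_uuid": "e3", "created_at": t(10, 0)},
                  {"event_uuid": "e4", "created_at": t(12, 30)}]
batch2, wm = incremental_batch(second, wm, seen)
print([r["event_uuid"] for r in batch2])  # ['e3', 'e4']
```

A plain timestamp watermark still misses rows that land late with an older timestamp, and the seen-UUID set here grows without bound; a production job would usually keep only the UUIDs near the watermark.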